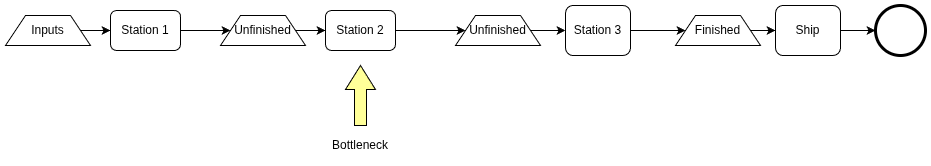

According to the Theory of Constraints (ToC), every system has exactly one bottleneck that determines the throughput of the overall system. ToC originated with manufacturing, but its results have been observed to be quite general.

In the diagram above, the whole process cannot deliver any faster than Station 2 is able to transform its buffer stock of unfinished inventory into its own output. Suppose you make Station 3 run ten times faster than it did last week, leaving everything else the same. The overall process throughput won’t change. All that will happen is that Station 3 gets starved of inputs and sits idle part of the time.

Suppose instead you invest in the throughput of Station 1, increasing it by ten times while again leaving everything else unchanged. The overall process throughput still won’t change. Station 1 will just overproduce and build up inventory of unfinished work waiting for Station 2. In some ways this is even worse, because inventory has a carry cost. Not only that, but the bigger the piles of unfinished inventory, the longer it takes something new to get through the whole process, since the new piece has to wait in those queues of unfinished work.
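The link between queue size and waiting time is usually stated as Little's Law: average time in the system equals average work in progress divided by average throughput. Here is a minimal sketch with made-up numbers. It assumes long-run averages in a reasonably stable system, and the function name is my own.

```python
def lead_time_days(work_in_progress: float, throughput_per_day: float) -> float:
    """Average time an item spends in the system, by Little's Law (W = L / lambda)."""
    if throughput_per_day <= 0:
        raise ValueError("throughput must be positive")
    return work_in_progress / throughput_per_day


# Illustrative numbers only: the bottleneck finishes 5 items per day.
print(lead_time_days(10, 5))   # 10 items queued  -> 2.0 days
print(lead_time_days(100, 5))  # 100 items queued -> 20.0 days
```

Same throughput, ten times the pile, ten times the wait.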

The only place you want to invest in capacity improvement is at the bottleneck. Increase capacity at Station 2 by ten times and you should get ten times the throughput. Why do I say you “should get ten times the throughput” instead of “you will get ten times the throughput”? Two things can go wrong. Station 1 might not be able to produce enough to keep Station 2 busy at its new higher capacity. That would indicate Station 1 is now the bottleneck. Or Station 3 might not be able to consume enough of Station 2’s outputs, causing an inventory buildup. That would mean Station 3 is the new bottleneck.
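The three experiments above can be sketched in a few lines. This is a steady-state toy model that ignores variability, and the capacities are invented for illustration: throughput of stations in series is simply the smallest capacity.

```python
def line_throughput(capacities: dict[str, float]) -> tuple[str, float]:
    """Return (bottleneck station, throughput) for stations arranged in series."""
    bottleneck = min(capacities, key=capacities.get)
    return bottleneck, capacities[bottleneck]


# Hypothetical capacities in items per day.
baseline = {"Station 1": 20, "Station 2": 5, "Station 3": 15}

print(line_throughput(baseline))                        # ('Station 2', 5)
print(line_throughput({**baseline, "Station 3": 150}))  # ('Station 2', 5)
print(line_throughput({**baseline, "Station 1": 200}))  # ('Station 2', 5)
print(line_throughput({**baseline, "Station 2": 50}))   # ('Station 3', 15)
```

With these numbers, the tenfold investment at Station 2 yields only three times the throughput, because the bottleneck moves to Station 3.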

Manufacturing Software

Writing code is not making car parts. Even so, it has proven useful to look at the overall system of work in terms of its throughput, cycle time, and failure rate. In an organization that creates software, the inventory looks like spreadsheets, Jira tickets, Git commits, and undeployed pull requests. The work stations are product managers, designers, developers, merge queues, build pipelines, and pre-prod environments.

“Throughput” here means how many features you deliver in a given time frame. If most of your features are value-producing, then higher throughput means higher profits.

So what happens when you adopt AI-driven development? The short version is that you’ve increased the capacity of Station 2, the coding stage. Does that mean you’ve increased the overall throughput? Maybe, maybe not. You might just be overproducing inventory.

GitHub has recently blamed its poor availability on “acceleration in agentic development workflow”. That sounds like a machine breaking.

Your build pipeline may be breaking too. Is your CI machinery keeping up with the increased PR volume? Reviewing pull requests, running builds, tests, security scans… these all consume the capacity in the later stages of your delivery process. Watch out for increasing queue depth there! That’s unfinished inventory building up and it would mean the bottleneck is not coding but validation and verification (V&V).
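To see why queue depth is the signal to watch, here is a deliberately crude sketch: a fixed number of PRs arrives each day and the pipeline can validate a fixed number. The rates are invented and real arrivals are bursty, so treat it as intuition rather than a capacity model.

```python
def queue_depth_over_time(arrivals_per_day: int, capacity_per_day: int,
                          days: int, start: int = 0) -> list[int]:
    """Track how many PRs are waiting for validation at the end of each day."""
    depth = start
    history = []
    for _ in range(days):
        # The queue cannot go negative: spare capacity is simply lost.
        depth = max(0, depth + arrivals_per_day - capacity_per_day)
        history.append(depth)
    return history


# Hypothetical pipeline that can validate 50 PRs per day.
print(queue_depth_over_time(40, 50, 5))  # [0, 0, 0, 0, 0]
print(queue_depth_over_time(80, 50, 5))  # [30, 60, 90, 120, 150]
```

Once arrivals exceed capacity, the queue does not level off. It grows every day until something changes.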

You might also see an increased number of deployments into your production environment. This is good, mostly. But if your services are configured to hard kill on deployments, then every deployment means failed requests for some number of users. Maybe that’s tolerable at a low deployment frequency. Keep in mind, though, that a user request fails if any of the services along its call tree break. A microservice soup frothing with deployments will feel 88% available instead of 99%.
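The compounding is easy to check. One set of assumptions that lands near that figure: every service in the call tree succeeds 99% of the time, failures are independent, and a request touches about thirteen services. All three are my assumptions for illustration.

```python
def request_success_rate(per_service_availability: float,
                         services_in_call_tree: int) -> float:
    """Probability a request succeeds when every service it touches must succeed.

    Assumes failures are independent across services.
    """
    return per_service_availability ** services_in_call_tree


for n in (1, 5, 13):
    print(n, round(request_success_rate(0.99, n), 3))
# 1 0.99
# 5 0.951
# 13 0.878
```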

Double Bind

It’s a cruel irony: our best way to catch AI mistakes is to increase V&V work, but that slows down the new bottleneck stage. We need to increase the capacity of build systems to match.

- If you depend on external vendors during build and deploy, you need to monitor them carefully to see when it’s time to pull those dependencies in-house.

- Platform teams are usually understaffed and their tools undersized. Keep an eye on your artifact and container repositories.

- SAST and DAST capacity is going to be very tough to increase since the output of those tools still often requires human review.

- We typically instruct AI agents to write unit tests but we don’t tell them to measure test execution time or consolidate tests. As a result, test times have a way of creeping upward. Consider replacing comprehensive unit tests with acceptance tests.
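Measuring test time does not have to be elaborate. Many test runners can emit a JUnit-style XML report in which each `testcase` element carries a `time` attribute in seconds. Assuming your runner produces something like that, a sketch for listing the slowest tests might look like this. The sample report and test names are invented.

```python
import xml.etree.ElementTree as ET


def slowest_tests(junit_xml: str, top_n: int = 3) -> list[tuple[str, float]]:
    """Return the top_n slowest test cases from a JUnit-style XML report."""
    root = ET.fromstring(junit_xml)
    timings = [
        (f"{case.get('classname', '')}.{case.get('name', '')}",
         float(case.get("time", 0)))
        for case in root.iter("testcase")
    ]
    return sorted(timings, key=lambda item: item[1], reverse=True)[:top_n]


# Invented sample report for illustration.
SAMPLE = """<testsuite name="orders" tests="3">
  <testcase classname="test_orders" name="test_create" time="0.012"/>
  <testcase classname="test_orders" name="test_bulk_import" time="4.310"/>
  <testcase classname="test_orders" name="test_cancel" time="0.450"/>
</testsuite>"""

print(slowest_tests(SAMPLE, top_n=2))
# [('test_orders.test_bulk_import', 4.31), ('test_orders.test_cancel', 0.45)]
```

Feeding a list like this back to the agent gives it something concrete to consolidate or speed up.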

Converging Parallel Workstreams

So far I’ve mostly talked about a single flow through a single process. In reality, each team is like its own production line. Those lines all converge at a big integration step. Defects creep in at the interfaces between services, so integration is where many defects are uncovered. Great! We want our build process to catch those defects and reject changes that would break the system. However, each rejected build means work goes back upstream. Coding is not the bottleneck anymore, so we shouldn’t worry about adding to the load on the coding agents. However, once an agent fixes a defect it pushes another change forward into V&V. This added load is called “failure demand.”
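A rough way to size failure demand: suppose every submission to V&V is rejected with the same probability, independently, and each rejected change is fixed and resubmitted until it passes. Then each change costs on average 1 / (1 - rejection rate) trips through V&V. The model and the numbers below are my simplification, not measurements.

```python
def vv_submissions_per_day(new_changes_per_day: float, rejection_rate: float) -> float:
    """Expected V&V submissions per day, counting resubmissions of rejected changes.

    Assumes each submission is rejected independently with the same probability,
    so the expected number of attempts per change is 1 / (1 - rejection_rate).
    """
    if not 0 <= rejection_rate < 1:
        raise ValueError("rejection_rate must be in [0, 1)")
    return new_changes_per_day / (1 - rejection_rate)


# Hypothetical: 100 new changes per day at different rejection rates.
for rate in (0.0, 0.2, 0.5):
    print(rate, round(vv_submissions_per_day(100, rate), 1))
# 0.0 100.0
# 0.2 125.0
# 0.5 200.0
```

At a 50% rejection rate, half of the bottleneck's capacity goes to rework.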

Another lesson from ToC is to do more work upstream to avoid putting defective pieces into the bottleneck. Does that create a paradox? How do I avoid putting defective work into the stage that detects the defects?

One way is through more cross-service quality checking during code creation. Local builds run by agents should catch more of the integration defects. Perhaps via property-based testing. Maybe we move to strongly typed API specifications outside of the service code, taking a lesson from the days of IDLs.
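As a sketch of what that could look like, here is a hand-rolled property check using only the standard library. It stands in for a real property-based testing library such as Hypothesis, which would also shrink failing inputs. The `OrderCreated` contract and both services are hypothetical.

```python
import json
import random
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class OrderCreated:
    """Hypothetical typed contract shared by a producer and a consumer service."""
    order_id: int
    quantity: int
    sku: str


def producer_encode(event: OrderCreated) -> str:
    """What the (hypothetical) producing service puts on the wire."""
    return json.dumps(asdict(event))


def consumer_decode(payload: str) -> OrderCreated:
    """What the (hypothetical) consuming service reconstructs from the wire."""
    data = json.loads(payload)
    return OrderCreated(int(data["order_id"]), int(data["quantity"]), str(data["sku"]))


def check_round_trip(trials: int = 500, seed: int = 0) -> int:
    """Property: for any valid event, decode(encode(event)) == event."""
    rng = random.Random(seed)
    alphabet = "ABCDEFGHIJKLMNOPQRSTUVWXYZ0123456789-"
    for _ in range(trials):
        event = OrderCreated(
            order_id=rng.randrange(0, 2**63),
            quantity=rng.randrange(1, 10_000),
            sku="".join(rng.choice(alphabet) for _ in range(rng.randrange(1, 20))),
        )
        assert consumer_decode(producer_encode(event)) == event, event
    return trials


print(check_round_trip())  # 500
```

A check like this runs in the agent's local build, so a mismatch between producer and consumer never reaches the shared pipeline.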

Summing Up

Our architecture and processes evolved for human-driven coding rates. Code production is no longer the bottleneck, if it ever was. Right now, it looks like the next bottleneck will be version control, build, test, and deployment systems. The lag time between “code commit” and “running in production” has gotten longer and longer as we’ve added more automated checks… and we need to add even more to catch agentic errors. Our build systems aren’t prepared for the volume of work. We need to elevate the capacity of those processes and simultaneously offload work to upstream stages where possible. It’s OK to generate more code if it reduces failure demand or validation time in the build pipeline.